The cost of eventual consistency

March 3, 2010

Amazon recently announced a set of new features for SimpleDB (consistent read, conditional put and conditional delete). You can read Werner’s and Jeff Barr’s posts to learn more about the details of the features. On the surface, this looks like just another set of incremental features, but it is actually quite significant from a system design perspective. The CAP theorem states that a system can attain only two out of three properties: Consistency, Availability and Partition tolerance. In the past, Amazon has been preaching the eventual consistency model, where they give up consistency (C in CAP) in favor of availability (A in CAP) and partition tolerance (P in CAP). The new features sit at a different design point than all their past offerings, i.e., they give up availability in favor of consistency and partition tolerance.

Since the features sit at a totally different design point, they may have very different performance characteristics. Werner, in his post, states that Consistent Read has “Higher read latency” and “Lower read throughput”. To see how different, we ran a set of experiments in EC2. The result: we could not perceive any difference in either latency or throughput. For details on our experiments, read on.

Latency

Single thread

We ran several experiments to understand the latency differences. First, we ran GetAttribute (both with and without consistent read) and PutAttribute (both with and without conditional put) on a single item and a single attribute, sequentially in a single thread, 1,000 times each, and then measured the average latency. For the conditional PutAttribute, we first read the previous value and used it as the condition, then randomly generated a new value for the put request. The time reported for PutAttribute covers only the PutAttribute API call; it does not include the time to read the previous value. The average latency is:

GetAttribute 22ms

GetAttribute with ConsistentRead 23ms

PutAttribute 110ms

PutAttribute Conditional 118ms

The time differences between the two versions of GetAttribute and between the two versions of PutAttribute are small enough that they are essentially the same. Over many runs of the experiment, we have seen many cases where a consistent read GetAttribute or a conditional PutAttribute takes a few milliseconds longer.
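The measurement procedure described above can be sketched roughly as follows. This is a minimal illustration, not the harness used for the numbers reported here: `average_latency_ms`, `get_attribute`, `conditional_put` and the in-memory `item` are hypothetical stand-ins, so the timings it prints say nothing about SimpleDB. The point is only that the read of the previous value runs outside the timed region.

```python
import random
import time


def average_latency_ms(operation, repetitions=1000, prepare=None):
    """Return the mean wall-clock time of operation(arg) in milliseconds.

    prepare() runs before each call and is NOT timed; its result is passed
    to operation. This mirrors excluding the read of the previous value
    from the time reported for the conditional put.
    """
    total = 0.0
    for _ in range(repetitions):
        arg = prepare() if prepare is not None else None
        start = time.perf_counter()
        operation(arg)
        total += time.perf_counter() - start
    return total * 1000.0 / repetitions


# Hypothetical in-memory stand-in for one item with one attribute.
item = {"attr": "0"}


def get_attribute(_=None):
    return item["attr"]


def conditional_put(expected):
    # Succeeds only if the attribute still holds the value read earlier.
    if item["attr"] != expected:
        raise RuntimeError("conditional check failed")
    item["attr"] = str(random.random())


if __name__ == "__main__":
    print("get (ms):", average_latency_ms(get_attribute))
    print("conditional put (ms):",
          average_latency_ms(conditional_put, prepare=get_attribute))
```

In a real run, the two stand-in functions would be replaced by calls to the SimpleDB client of your choice.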

Multiple threads

Accessing a single attribute sequentially, as in the last experiment, is far from stressing SimpleDB. We also ran a set of experiments to see what happens when there are multiple parallel GetAttribute and PutAttribute calls. We used multiple threads on an m1.small EC2 instance and varied the number of threads to increase the level of parallelism.

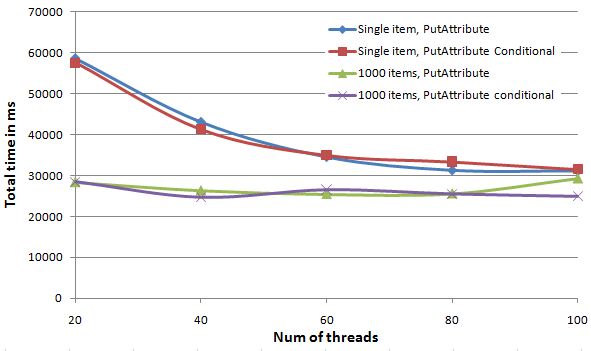

For PutAttribute, we ran 10,000 requests and measured the overall time it took for all threads to finish all requests. The results are shown in the following figure. There are 4 curves. The “Single item, PutAttribute” curve is writing a single attribute to a single item repeatedly. The “Single item, PutAttribute Conditional” curve is similar except that it invokes the conditional PutAttribute, where the condition checks that a fictitious attribute does not exist. We could not use a condition based on the attribute’s previous value because, with multiple threads, the PutAttribute call would almost always fail: other threads would have overwritten the previous value between the GetAttribute and PutAttribute calls. The two “1000 items” curves are similar to their “Single item” cousins, except that we randomly choose 1 out of 1000 items to write to for each request. The goal is to spread out the workload.
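To illustrate why a condition on the previous value breaks down under contention while a condition on a non-existent attribute does not, here is a small simulation. It is a sketch under stated assumptions: `FakeStore` is a hypothetical in-memory stand-in with an atomic check-and-write, not SimpleDB, and `run_writers` and the attribute names are made up for the example.

```python
import threading
import time


class FakeStore:
    """Hypothetical single-item store with an atomic conditional put."""

    def __init__(self):
        self._lock = threading.Lock()
        self._attrs = {"value": "0"}

    def get(self, name):
        with self._lock:
            return self._attrs.get(name)

    def conditional_put(self, name, new_value, expected_name, expected_value):
        # Write only if expected_name currently equals expected_value;
        # expected_value=None means "the attribute must not exist".
        with self._lock:
            if self._attrs.get(expected_name) != expected_value:
                return False
            self._attrs[name] = new_value
            return True


def run_writers(store, threads, requests_per_thread, use_previous_value):
    """Return how many conditional puts failed across all threads."""
    failures = [0] * threads

    def worker(idx):
        for i in range(requests_per_thread):
            new_value = f"{idx}-{i}"
            if use_previous_value:
                previous = store.get("value")
                time.sleep(0)  # yield, so other threads can write in between
                ok = store.conditional_put("value", new_value, "value", previous)
            else:
                ok = store.conditional_put("value", new_value,
                                           "no_such_attribute", None)
            if not ok:
                failures[idx] += 1

    pool = [threading.Thread(target=worker, args=(t,)) for t in range(threads)]
    for t in pool:
        t.start()
    for t in pool:
        t.join()
    return sum(failures)


if __name__ == "__main__":
    print("failures, previous-value condition:",
          run_writers(FakeStore(), 8, 200, True))
    print("failures, non-existent-attribute condition:",
          run_writers(FakeStore(), 8, 200, False))
```

The non-existent-attribute condition never fails because no thread ever creates that attribute. The previous-value condition fails whenever another thread writes between the read and the put, and how often that happens depends on thread scheduling.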

There are two observations from the figure. First, the conditional version is almost identical to the normal eventually consistent version. The small differences between the two versions can all be attributed to the normal variation in accessing SimpleDB. Second, the single item PutAttribute takes longer to process, presumably because the load on the single item is much higher when all threads are accessing the same item at the same time. However, as we increase the parallelism, the longer latency can be effectively hidden by the multiple threads. At 100 threads, the “Single item” and “1000 items” results are almost identical.

If we increase the number of threads beyond 100, the overhead of thread scheduling starts to dominate. Although it is not shown in the figure, the overall processing time actually increases as we increase the number of threads further.
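The way more threads hide per-request latency can be sketched with a fixed batch of requests spread over a thread pool. This is an illustration only: `total_time_s` and `simulated_request` are hypothetical names, and the 2 ms sleep is an arbitrary stand-in for a network round trip, not a measured SimpleDB latency.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def simulated_request():
    # Arbitrary stand-in for one blocking API call (assumed 2 ms).
    time.sleep(0.002)


def total_time_s(operation, total_requests, threads):
    """Wall-clock seconds for `threads` workers to finish all requests."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=threads) as pool:
        # Consume the iterator so any exception from a request surfaces here.
        list(pool.map(lambda _: operation(), range(total_requests)))
    return time.perf_counter() - start


if __name__ == "__main__":
    for threads in (1, 10, 50):
        elapsed = total_time_s(simulated_request, 500, threads)
        print(f"{threads:3d} threads: {elapsed:.3f} s")
```

Because each simulated request spends its time blocked rather than computing, the total time drops as threads are added, until scheduling overhead takes over.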

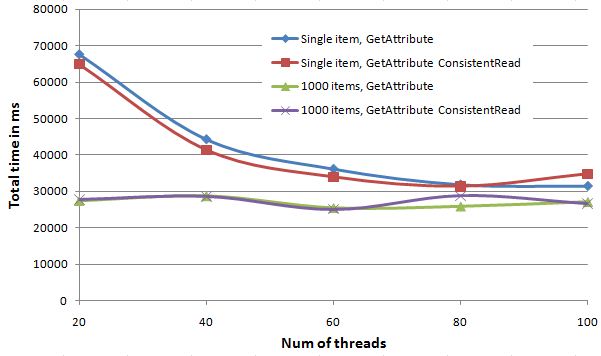

For GetAttribute, we also ran 10,000 requests and measured the overall time. However, to avoid any caching effect, we had 5 threads running in the background constantly writing new values to the items. The following figure shows the results for GetAttribute.

From the figure, we can arrive at two observations similar to those in the PutAttribute case. First, the ConsistentRead version is almost identical to the normal eventually consistent read. Again, the differences can all be attributed to the normal SimpleDB fluctuation. Second, GetAttribute on a single item takes longer because of the higher stress. Again, the latency can be effectively hidden with more threads.

Throughput

There is no easier way to measure the sustainable throughput than jamming the system with a large number of requests, and that is exactly what we did. We used Cloud MapReduce to write the test cases, so that we could easily scale up to a large number of nodes. SimpleDB has a much lower write throughput than read throughput, so to jam SimpleDB, we ran 100 threads of GetAttribute and 100 threads of PutAttribute simultaneously on each map task, where each map task ran on a separate m1.small EC2 instance. With 4 m1.small EC2 instances running in parallel, we could consistently overload SimpleDB and trigger the following error message.

Response Status Code: 503

<Response><Errors><Error><Code>ServiceUnavailable</Code><Message>Service AmazonSimpleDB is currently unavailable. Please try again later</Message></Error></Errors><RequestID>613df115-aee4-f86f-7a22-9a94f9b1633c</RequestID></Response>

With 2 or 3 EC2 instances, we occasionally triggered the error message. It seems to depend on the load other people are putting on SimpleDB as well. Regardless, we were not able to perceive a difference between the conditional PutAttribute or ConsistentRead GetAttribute and their normal eventually consistent counterparts.
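A load generator needs some way to recognize and count these overload responses. One possible sketch parses the error body shown above with Python's standard XML library. The function names `parse_error` and `tally_errors` are hypothetical, and the `(status_code, body)` pair format is an assumption about how responses are collected.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# The error body quoted above, verbatim.
SAMPLE_BODY = (
    "<Response><Errors><Error><Code>ServiceUnavailable</Code>"
    "<Message>Service AmazonSimpleDB is currently unavailable. "
    "Please try again later</Message></Error></Errors>"
    "<RequestID>613df115-aee4-f86f-7a22-9a94f9b1633c</RequestID></Response>"
)


def parse_error(body):
    """Return (error_code, request_id) from an error response body."""
    root = ET.fromstring(body)
    return root.findtext("Errors/Error/Code"), root.findtext("RequestID")


def tally_errors(responses):
    """Count error codes in an iterable of (status_code, body) pairs."""
    counts = Counter()
    for status, body in responses:
        if status >= 400:
            code, _request_id = parse_error(body)
            counts[code] += 1
    return counts


if __name__ == "__main__":
    print(parse_error(SAMPLE_BODY))
    print(tally_errors([(503, SAMPLE_BODY), (200, ""), (503, SAMPLE_BODY)]))
```

Comparing the tally across runs with and without the consistency options would be one way to check whether either variant hits the overload point sooner.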

Conclusion

During the 3 days we performed our tests, we were not able to observe a total system failure. There may have been single node failures in the Amazon cluster, but we were not able to observe them externally. From what we saw, the strongly consistent Get and Put behave exactly the same as the eventually consistent versions, both in terms of latency and throughput. So, according to the CAP theorem, did we just get consistency for free without sacrificing availability?